Image Credits: OpenAI

The next breakthrough to take the AI world by storm might be AI that generates 3D models. This week, OpenAI open-sourced Point-E, a machine learning system that creates a 3D object from a text prompt.

According to a paper published along with the codebase, Point-E can produce 3D models in one to two minutes on a single Nvidia V100 GPU.

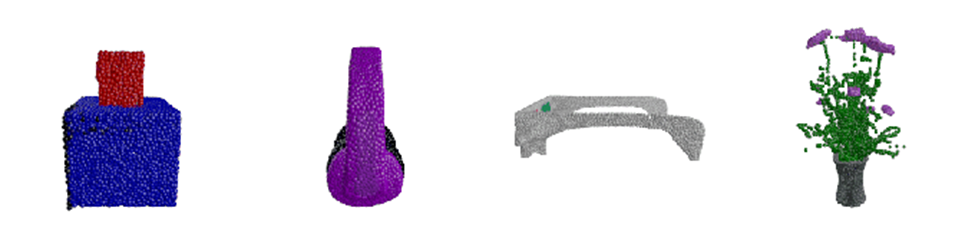

Point-E does not create 3D objects in the traditional sense. Rather, it generates point clouds, or discrete sets of data points in space, that represent a 3D shape—hence the cheeky acronym. (The “E” in Point-E stands for “efficiency” because it’s apparently faster than previous approaches to generating 3D objects.)
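To make "discrete set of data points in space" concrete, here is a minimal Python sketch of a colored point cloud. The `ColoredPoint` type and `bounding_box` helper are hypothetical illustrations, not Point-E's actual data format. Notice that nothing links one point to another: there are no edges or faces, only positions and colors.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ColoredPoint:
    """One sample on an object's surface: a 3D position plus an RGB color."""
    x: float
    y: float
    z: float
    r: float  # color channels assumed (for this sketch) to lie in [0, 1]
    g: float
    b: float


# A point cloud is just an unordered collection of such points;
# no edges or faces connect them.
PointCloud = List[ColoredPoint]


def bounding_box(cloud: PointCloud) -> Tuple[Tuple[float, float, float], Tuple[float, float, float]]:
    """Return the (min corner, max corner) of the axis-aligned box enclosing the cloud."""
    if not cloud:
        raise ValueError("empty point cloud")
    xs = [p.x for p in cloud]
    ys = [p.y for p in cloud]
    zs = [p.z for p in cloud]
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


# Usage: a tiny, hand-made cloud of three red points.
cloud = [
    ColoredPoint(0.0, 0.0, 0.0, 1.0, 0.0, 0.0),
    ColoredPoint(1.0, 0.5, -0.5, 1.0, 0.0, 0.0),
    ColoredPoint(-0.25, 2.0, 0.5, 1.0, 0.0, 0.0),
]
print(bounding_box(cloud))  # ((-0.25, 0.0, -0.5), (1.0, 2.0, 0.5))
```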

Point clouds are easier to synthesize computationally, but they don’t capture an object’s fine-grained shape or texture, which is currently Point-E’s key limitation.

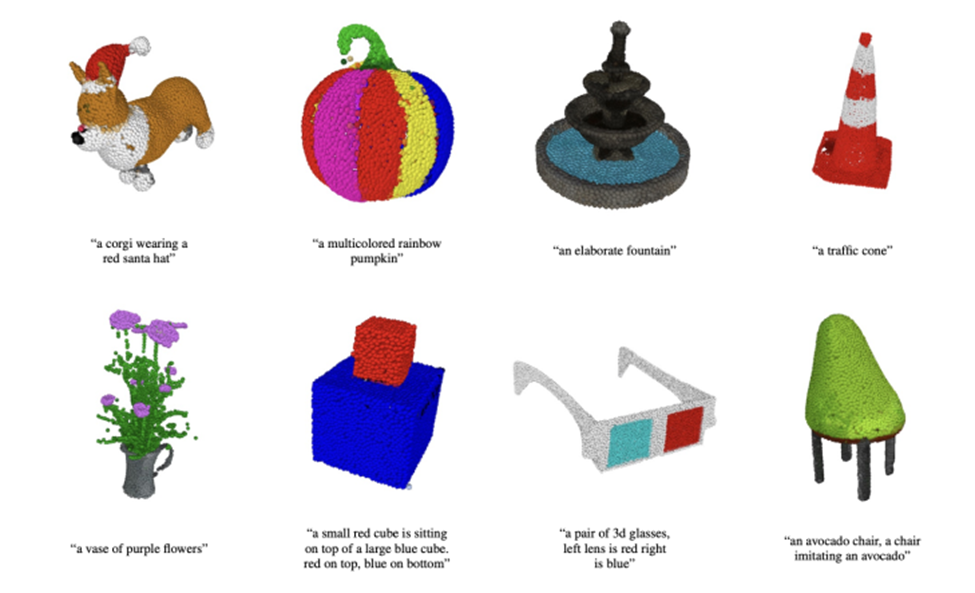

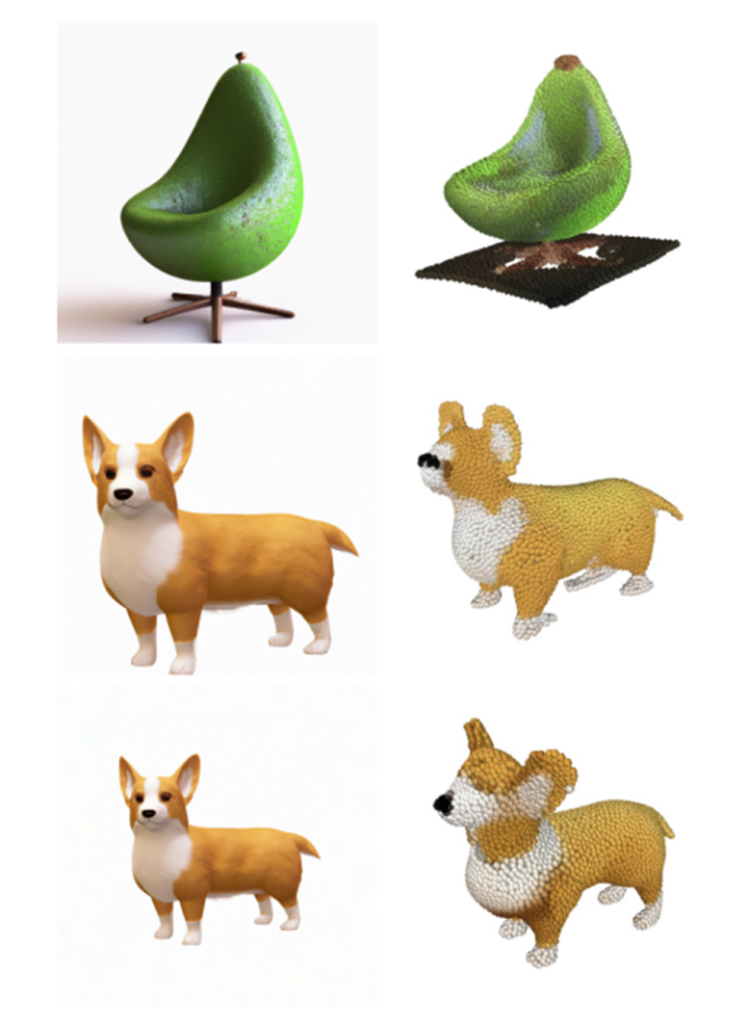

To get around this limitation, the Point-E team trained an additional AI system to convert Point-E’s point clouds into meshes. (Meshes, the collections of vertices, edges, and faces that define an object, are commonly used in 3D modeling and design.)
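The vertices-edges-faces definition maps onto a very small data structure. The sketch below is a hypothetical illustration, not the representation Point-E’s mesh converter actually outputs: it stores vertex positions and triangular faces, derives the edges from the faces, and sanity-checks the result with Euler’s formula.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass
class TriangleMesh:
    """Hypothetical minimal mesh: vertex positions plus triangular faces.

    Each face stores three indices into `vertices`; edges are implied by faces.
    """
    vertices: List[Tuple[float, float, float]]
    faces: List[Tuple[int, int, int]]

    def edges(self) -> Set[Tuple[int, int]]:
        """Collect each undirected edge once, as a sorted (low, high) index pair."""
        result: Set[Tuple[int, int]] = set()
        for a, b, c in self.faces:
            for u, v in ((a, b), (b, c), (c, a)):
                result.add((min(u, v), max(u, v)))
        return result

    def euler_characteristic(self) -> int:
        """V - E + F; equals 2 for a connected closed surface with no handles."""
        return len(self.vertices) - len(self.edges()) + len(self.faces)


# Usage: a tetrahedron, the simplest closed triangle mesh.
tetra = TriangleMesh(
    vertices=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)],
    faces=[(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)],
)
print(len(tetra.edges()))            # 6
print(tetra.euler_characteristic())  # 2
```

Unlike the point cloud, the faces here explicitly say which points form a surface, which is what makes meshes usable in standard 3D modeling tools.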

However, they note in the paper that the model can sometimes miss certain parts of objects, resulting in boxy or distorted shapes.

Image Credits: OpenAI

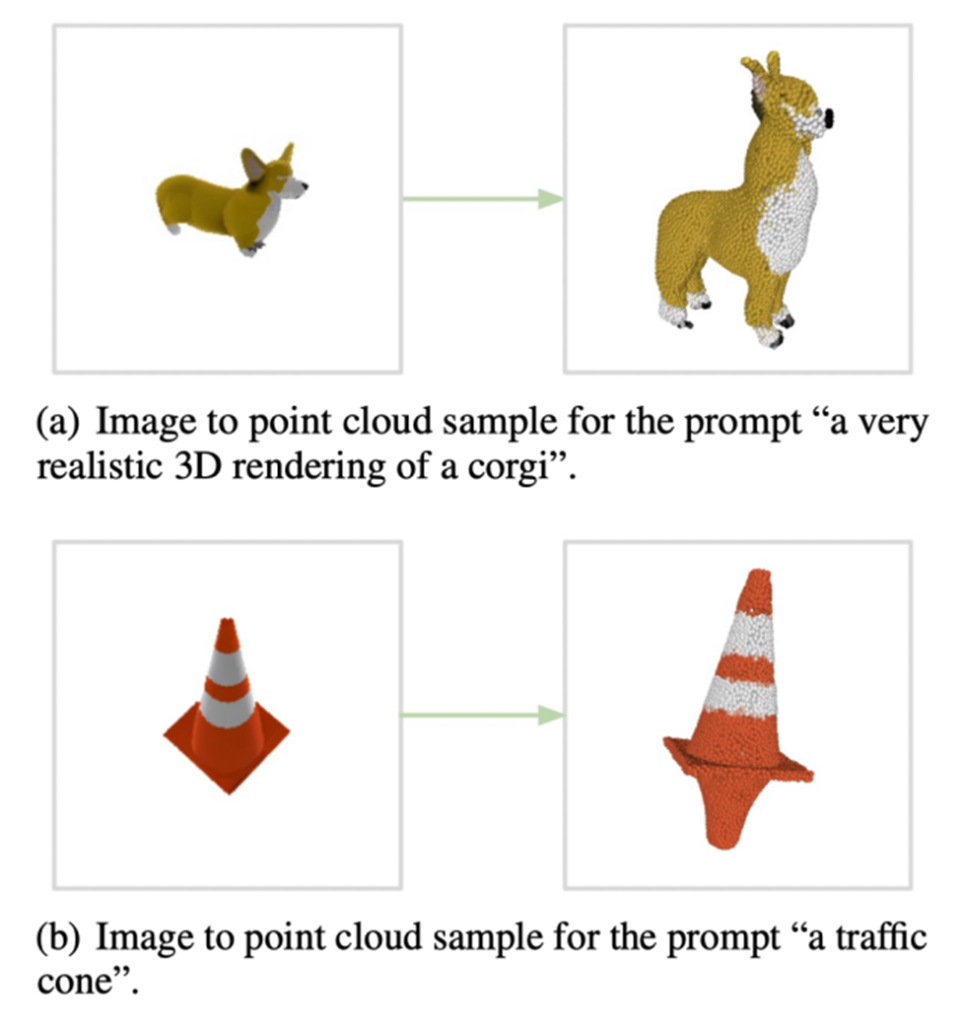

Apart from the standalone mesh-generating model, Point-E consists of two models: a text-to-image model and an image-to-3D model. The text-to-image model, similar to generative art systems such as Stable Diffusion and OpenAI’s own DALL-E 2, was trained on labeled images to understand the associations between words and visual concepts.

On the other hand, the image-to-3D model was fed a set of images paired with 3D objects, so it learned to efficiently translate between the two.

The future of AI-generated 3D models

When given a text prompt (for example, “3D printable gear, a single gear 3 inches in diameter and half an inch thick”), Point-E’s text-to-image model generates a synthetic rendered object that is fed to the image-to-3D model, which then generates a point cloud.
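The flow described above is a composition of two stages. The Python sketch below shows only that data flow: `text_to_point_cloud` and both toy models are hypothetical stand-ins, not OpenAI’s code, and the fake image-to-3D step simply places pixels on a flat plane rather than inferring real geometry.

```python
from typing import Callable, List, Tuple

# Hypothetical types: an image is rows of RGB pixels; a point is x, y, z, r, g, b.
Image = List[List[Tuple[int, int, int]]]
Point = Tuple[float, float, float, float, float, float]
TextToImage = Callable[[str], Image]
ImageTo3D = Callable[[Image], List[Point]]


def text_to_point_cloud(prompt: str,
                        text_to_image: TextToImage,
                        image_to_3d: ImageTo3D) -> List[Point]:
    """Two-stage pipeline: prompt -> synthetic rendered view -> point cloud."""
    rendered_view = text_to_image(prompt)  # stage 1: render a single view
    return image_to_3d(rendered_view)      # stage 2: lift that view into 3D


# Toy stand-ins so the sketch runs end to end (real stages are neural networks).
def fake_text_to_image(prompt: str) -> Image:
    # A 2x2 gray "image"; a real model would condition on the prompt.
    return [[(128, 128, 128)] * 2 for _ in range(2)]


def fake_image_to_3d(image: Image) -> List[Point]:
    # Emit one point per pixel on the z = 0 plane, keeping the pixel's color.
    cloud: List[Point] = []
    for row, pixels in enumerate(image):
        for col, (r, g, b) in enumerate(pixels):
            cloud.append((float(col), float(row), 0.0, r / 255, g / 255, b / 255))
    return cloud


cloud = text_to_point_cloud(
    "3D printable gear, a single gear 3 inches in diameter and half an inch thick",
    fake_text_to_image,
    fake_image_to_3d,
)
print(len(cloud))  # 4
```

Keeping the two stages as separate, swappable callables mirrors the article’s description of two independently trained models: one learned from labeled images, the other from images paired with 3D objects.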

After training the models on a dataset of “several million” 3D objects and associated metadata, Point-E could produce colored point clouds that frequently matched text prompts, the OpenAI researchers say. It’s not perfect: Point-E’s image-to-3D model sometimes fails to understand the image from the text-to-image model, resulting in a shape that doesn’t match the text prompt.

Still, it’s orders of magnitude faster than the previous state of the art, at least according to the OpenAI team.

Converting the Point-E point clouds into meshes.

Image Credits: OpenAI

Point-E failure cases.

“While our method performs worse than state-of-the-art techniques in this evaluation, it produces samples in a small fraction of the time,” they wrote in the paper. “This could make it more practical for certain applications, or could allow for the discovery of higher-quality 3D objects.”

What are the applications, exactly? The OpenAI researchers point out that Point-E’s point clouds could be used to fabricate real-world objects, for example through 3D printing. With the additional mesh-converting model, the system could, once it’s a bit more polished, also find its way into game development and animation workflows.

OpenAI may be the latest company to jump into the fray with a 3D object generator, but it’s certainly not the first. Earlier this year, Google released DreamFusion, an expanded version of Dream Fields, a generative 3D system that the company unveiled back in 2021.

DreamFusion requires no 3D training data: it relies on a pretrained 2D text-to-image diffusion model, which means it can generate 3D representations of objects without ever being trained on 3D data.

While all eyes are currently on 2D art generators, model-synthesizing artificial intelligence could be the next big industry disruptor. 3D models are widely used in film and television, interior design, architecture and various scientific fields.

Architectural firms use them, for example, to demonstrate proposed buildings and landscapes, while engineers use models as designs for new equipment, vehicles and structures.
